Why so many smart people feel exhausted and can’t quite explain why.

You’re doing what you’re supposed to do.

You’re writing about policies. Updating guidelines. Adding disclaimers. Posting about “ethical and responsible AI.”

Maybe you work in tech. Maybe you advise companies. Maybe you don’t touch AI at all, and you just want to know whether your job, your stability, and your future still make sense.

And yet, none of this feels like it’s actually steering anything.

That’s because it isn’t.

Your job is not disappearing; it’s being negotiated.

And while you’re doing this? A Silicon Valley billionaire you’ve never heard of just spent $8 million to kill the AI safety law your state tried to pass.

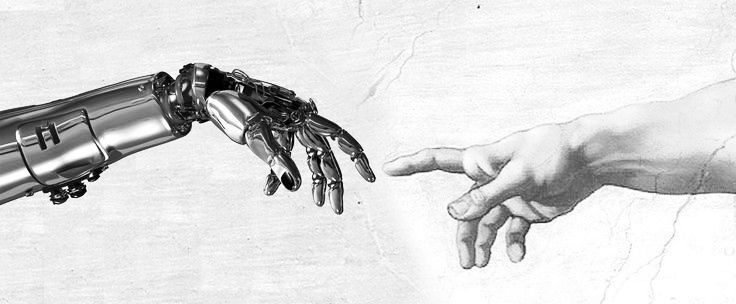

You think you’re building guardrails. They’re building the road.

The Real Fear Nobody’s Saying Out Loud

“I’m just over the instability of tech. It’s demoralizing.”

That’s not an X comment. That’s every conversation I’ve had with policy professionals in the last six months. You’re not just tired of learning new tools. You’re exhausted from the whiplash of trying to regulate something that rewrites itself faster than you can draft the memo.

“I’m holding onto my job for dear life and paying off debt so if I have to pivot I can take a pay cut. Very uncertain times. Super stressful.”

This is the part nobody puts in the compliance webinar. The actual fear isn’t about AI models getting smarter. It’s about your job becoming obsolete while you’re still trying to understand it. It’s about waking up and realizing the institution you’ve been protecting doesn’t exist anymore, and the people with power never cared if it did.

$100 Million Buys a Lot of “Centrism”

Here’s how it actually works:

In 2025, Silicon Valley investors launched a super PAC network called Leading the Future, seeded with more than $100 million from Andreessen Horowitz, OpenAI president Greg Brockman, and other AI backers. Its mandate is explicit: use campaign donations and digital ads in the 2026 midterms to advocate against strict AI rules, promote a “uniform national approach to AI,” and “aggressively oppose” candidates who threaten the industry’s agenda.

The playbook? Fairshake, the crypto super PAC that spent $130 million in 2024 and successfully lobbied for friendlier federal regulations. Same strategy. Same lobbyists. Different acronym.

This isn’t lobbying. This is pre-selecting which lawmakers will even be in the room when AI “safety” laws are written.

How to Ghost-Write Regulation and Call It Consensus

Here’s the genius of it: they don’t argue against regulation. They argue for reasonable regulation.

They position themselves as the rational center. On one side: hysterical over-regulation that will “stifle innovation” and “hand China the win.” On the other: reckless accelerationism. And in the middle? Their framework. The one written by the same people funding the super PAC.

“AI centrism” isn’t a compromise. It’s a rebrand of deregulation.

They don’t need to convince you that regulation is bad. They just need to convince you that their version of regulation is inevitable. And when you’re exhausted, demoralized, and underwater with compliance requests, “inevitable” starts to sound a lot like “reasonable.”

Your Job Description Just Changed (And Nobody Told You)

“Places like Google, Meta, and Microsoft are always looking for people who can translate complex policy stuff into executive-level messaging.”

This is it. This is the skill that actually matters.

You’re not building AI policy. You’re translating billion-dollar lobbying strategies into language that sounds like it came from your compliance department. You’re the messenger. And the message was written before you got hired.

“Are you guys giving any policy/guidance or letting people do whatever they want? I think it’s hard to enforce. I’m thinking of adding some notes in our policy stating don’t put any personal identifiable information.”

This is the actual conversation happening in enterprise IT right now. Not “how do we build ethical AI systems?” but “how do we write a memo that looks like we tried, so when this blows up, we’re not liable?”

Why They’re Terrified of the EU AI Act

“We are blocking all AI, and unblocking them based upon their compliance with the regulations in the EU AI Act. We are also implementing solutions internally that are based upon publicly available services.”

Notice what’s different? They’re not asking permission. They’re not waiting for a “uniform national approach.” They’re setting the standard and making vendors comply with it.

This is what actual AI governance looks like. And this is exactly why Silicon Valley is spending nine figures to make sure it doesn’t happen in the US. Because the moment you have enforceable standards, the game changes. Suddenly, “move fast and break things” becomes “move carefully or pay fines.”

Institutions Don’t Fail. They Get Purchased.

“The easy solution is to make institutions resilient through decentralized decision making. Very large institutions are vulnerable to information asymmetry and speed.”

Except here’s the problem: decentralization requires infrastructure. Local control requires local funding. And when billionaires can spend more on a single PAC than your entire state’s election budget, “resilient institutions” is a nice idea with no leverage.

“It’s not about institutions but about people, always is. If there are enough people ‘believing’ something, they will make it happen.”

Beautiful sentiment. Ignores the part where $100 million in targeted ads can change what people believe before they even know they’re being influenced.

The Only AI Policy That Matters Right Now

Stop writing policies that protect the institution. Start building skills that protect you.

Because here’s what’s actually happening:

1. The policy fight is already over. The money won. Federal preemption will kill state-level AI safety laws, and the “uniform national approach” will be written by the same people funding the super PACs.

2. Your compliance framework is theater. Unless you work for a company that’s willing to say “no” to billion-dollar vendors, your AI policy is a liability shield, not a guardrail.

3. The skill that matters is translation. Not policy design. Not ethics consulting. The ability to take complex technical and regulatory concepts and turn them into clear, executable guidance that executives will actually read and investors will actually fund.

So What Now?

You have three options:

Option 1: Keep writing AI policies that nobody will enforce, for institutions that are already being dismantled by people with more money than your entire org’s annual budget.

Option 2: Learn the actual game. Understand how policy is really written (by lobbyists), how regulation is really shaped (by super PACs), and how corporate compliance really works (as liability management, not safety).

Option 3: Build the translation skill that makes you indispensable. Become the person who can take a 200-page EU AI Act assessment and turn it into a two-page exec brief that actually gets read. Learn to speak the language of risk, compliance, and strategic positioning fluently enough that you can translate between technical teams, legal, and C-suite without losing the plot.

Because here’s the truth they don’t tell you in the compliance training:

AI policy won’t save your job. But the ability to translate chaos into clarity might.

The billionaires already know this. That’s why they’re not fighting regulation—they’re writing it. They’re not blocking AI—they’re building the language that makes their version of AI inevitable.

You can keep caring about AI policy. Just know that the people writing it stopped caring about you a long time ago.

—

The question isn’t whether you should care about AI policy. The question is whether you’re going to keep pretending the fight is about ethics, or whether you’re ready to learn how power actually works.

Write to Casandra Moreno at casandra@thelawsofai.com
